Why physics-grounded, interpretable models outperform black-box ML for operational decision-making — and how to compose them as dynamical subsystems inside an AI and simulation platform.

Where AI meets simulation.

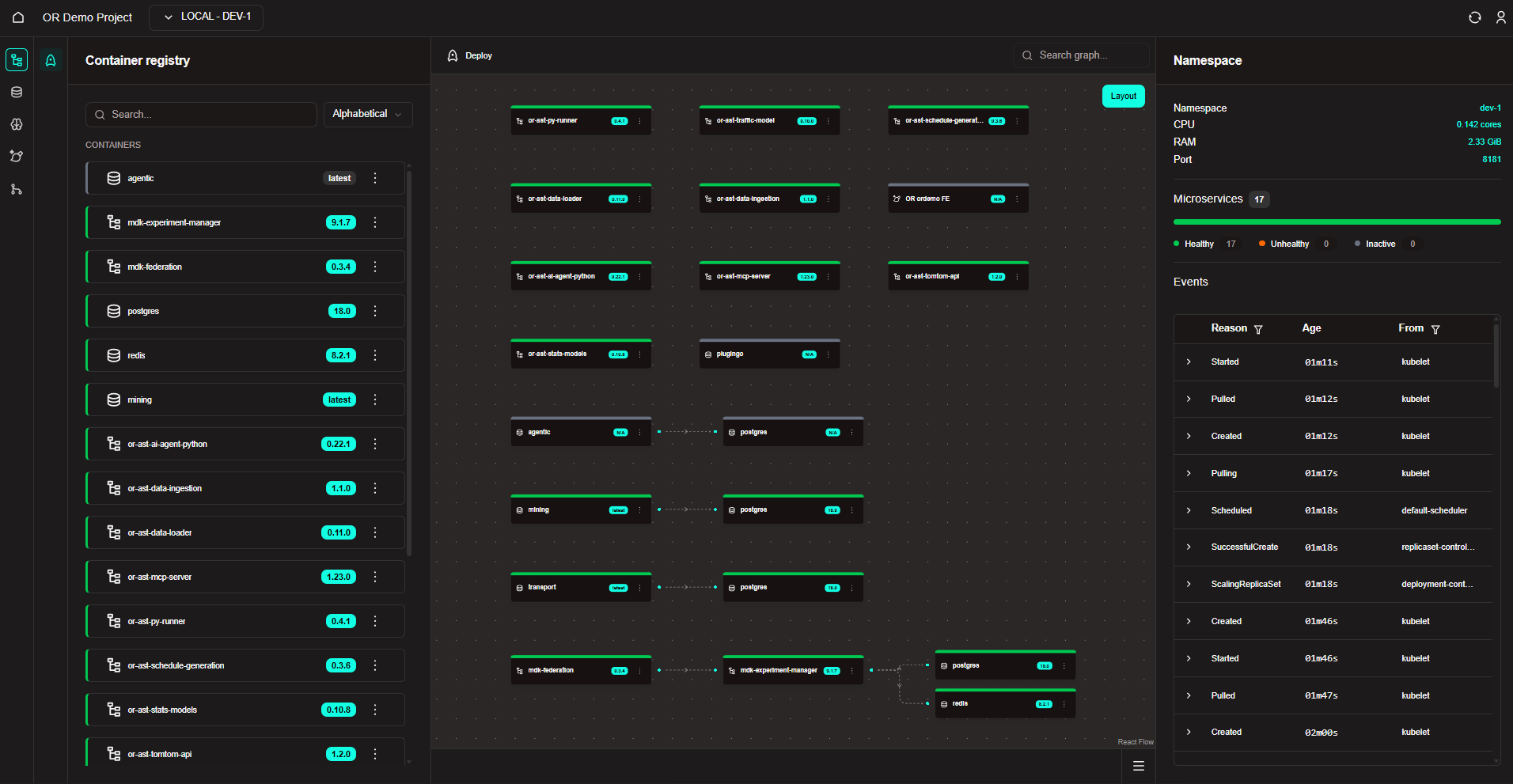

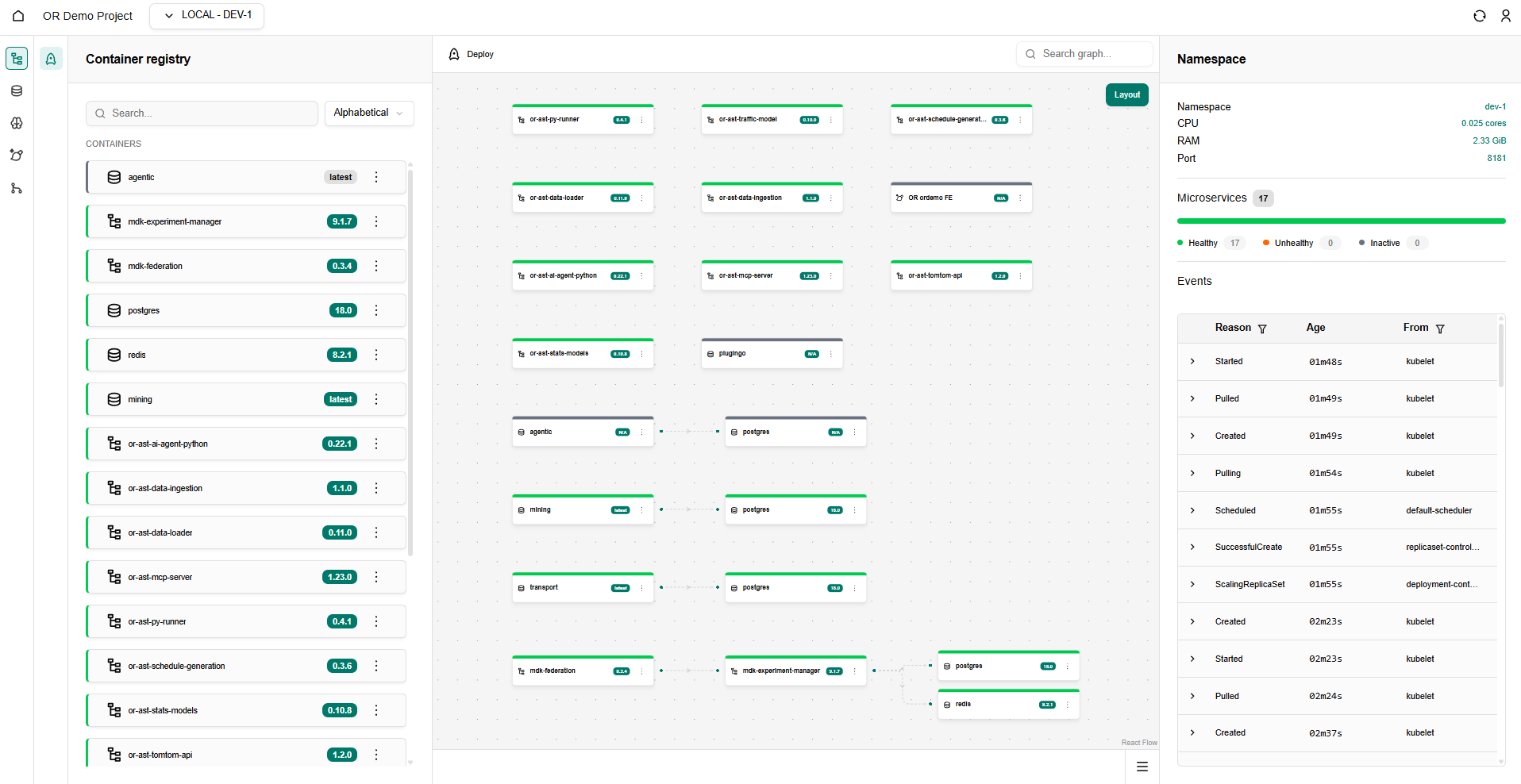

Optimal Reality is the AI and simulation platform for building and deploying intelligent applications — from physics-grounded models to runtime-personalised interfaces.

- Defence

- Transport

- Energy

- Critical Infrastructure

- Robotics

Every connected system. One operating reality.

Optimal Reality is the substrate beneath the world's connected things — unifying the systems that live, move and work into a single, decision-ready model of reality.

Homes, buildings, cities, utilities, health, energy — instrumented and responsive.

Transport, fleets, skies, space — coordinated across modes and operators.

Factories, mining, agriculture, supply chain — optimised with physics-grounded models.

Sensors → models → simulations → operators — in one stack.

Data Development Kit

Define schemas, ingest streams, and make real-world data first-class in your autonomy stack.

Model Development Kit (MDK)

Train, swap and deploy models — from perception to control — with full visibility into how they perform.

Frontend Development Kit (FDK)

Build operator-facing applications and dashboards on top of live autonomy data — without rewriting plumbing.

Scenario Development Kit (SCDK)

Author the synthetic worlds your systems need to learn in — with reproducibility and edge-case coverage built in.

Connect & Digitize

Sensor feeds, telemetry and live signals — unified.

Store & Structure

Real-world data made queryable and useful.

Segment & Understand

Raw streams turned into reasoned signals.

Simulate & Train

Validate behaviour synthetically before the real world.

Deploy & Manage

Roll out, monitor and govern at scale.

Edge Inference

Run intelligence where the action is.

Workflow fine-tuning & smarter task connections

This release focuses on giving you tighter control over autonomous workflow behaviour and reducing the friction in wiring tasks together.

- New: Workflow parameter fine-tuning panel. Dial in thresholds and tolerances directly from the FDK.

- Improved: Smarter task input/output mapping, so fewer manual connections are required.

- Improved: The run comparison view now supports side-by-side diffing of metric outputs.

- Fix: Resolved an edge case where streaming subscriptions could drop during long-running sessions.

Live data streaming & map customisation

Real-time data is now a first-class citizen, and the geospatial map view can be tailored to your operational environment.

- New: Live data streaming, so you can react to sensor and telemetry feeds as they arrive.

- New: Map customisation with custom layers, terrain overlays and asset tracking.

- Improved: AI cost tracking now surfaces inference spend per project and per workflow.

Custom function builder & state management

Build reusable logic without leaving the platform, and keep tighter tabs on every running process.

- New: Custom function builder for authoring functions and reusing them across projects.

- New: Granular access controls with per-project role assignments.

- Improved: State management now surfaces the lifecycle of every autonomous process.

Synthetic data templates & swappable models

- New: Synthetic data templates for common autonomy scenarios.

- New: Swappable AI models. Plug in perception, planning or control modules without rewiring.

- Improved: Smarter monitoring and alerts, with automatic anomaly flagging.
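The idea behind swappable models can be sketched generically: if every perception, planning or control module implements the same small interface, the workflow that calls it never needs rewiring when one module is replaced by another. The sketch below is a minimal illustration under that assumption only. `ControlModel`, `ProportionalController`, `BangBangController` and `run_step` are hypothetical names chosen for this example, not Optimal Reality's API.

```python
from typing import Protocol


class ControlModel(Protocol):
    """Hypothetical shared interface that every swappable control module implements."""

    def act(self, setpoint: float, measurement: float) -> float: ...


class ProportionalController:
    """Hypothetical module: output is proportional to the tracking error."""

    def __init__(self, gain: float) -> None:
        self.gain = gain

    def act(self, setpoint: float, measurement: float) -> float:
        return self.gain * (setpoint - measurement)


class BangBangController:
    """Hypothetical alternative module: full effort in the direction of the error."""

    def __init__(self, effort: float) -> None:
        self.effort = effort

    def act(self, setpoint: float, measurement: float) -> float:
        error = setpoint - measurement
        if error > 0:
            return self.effort
        if error < 0:
            return -self.effort
        return 0.0


def run_step(model: ControlModel, setpoint: float, measurement: float) -> float:
    """Hypothetical workflow step: depends only on the interface, so any module can be plugged in."""
    return model.act(setpoint, measurement)


if __name__ == "__main__":
    # Swap modules without changing run_step or its inputs.
    for model in (ProportionalController(gain=0.5), BangBangController(effort=1.0)):
        print(type(model).__name__, run_step(model, setpoint=10.0, measurement=6.0))
```

Because `run_step` is typed against the protocol rather than a concrete class, replacing one controller with another is a one-line change at the call site; the same pattern would apply to perception or planning modules with their own interfaces.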

Ready to ship real-world autonomy?

We work with teams building the next generation of physical AI — from robotics startups to enterprise operations.